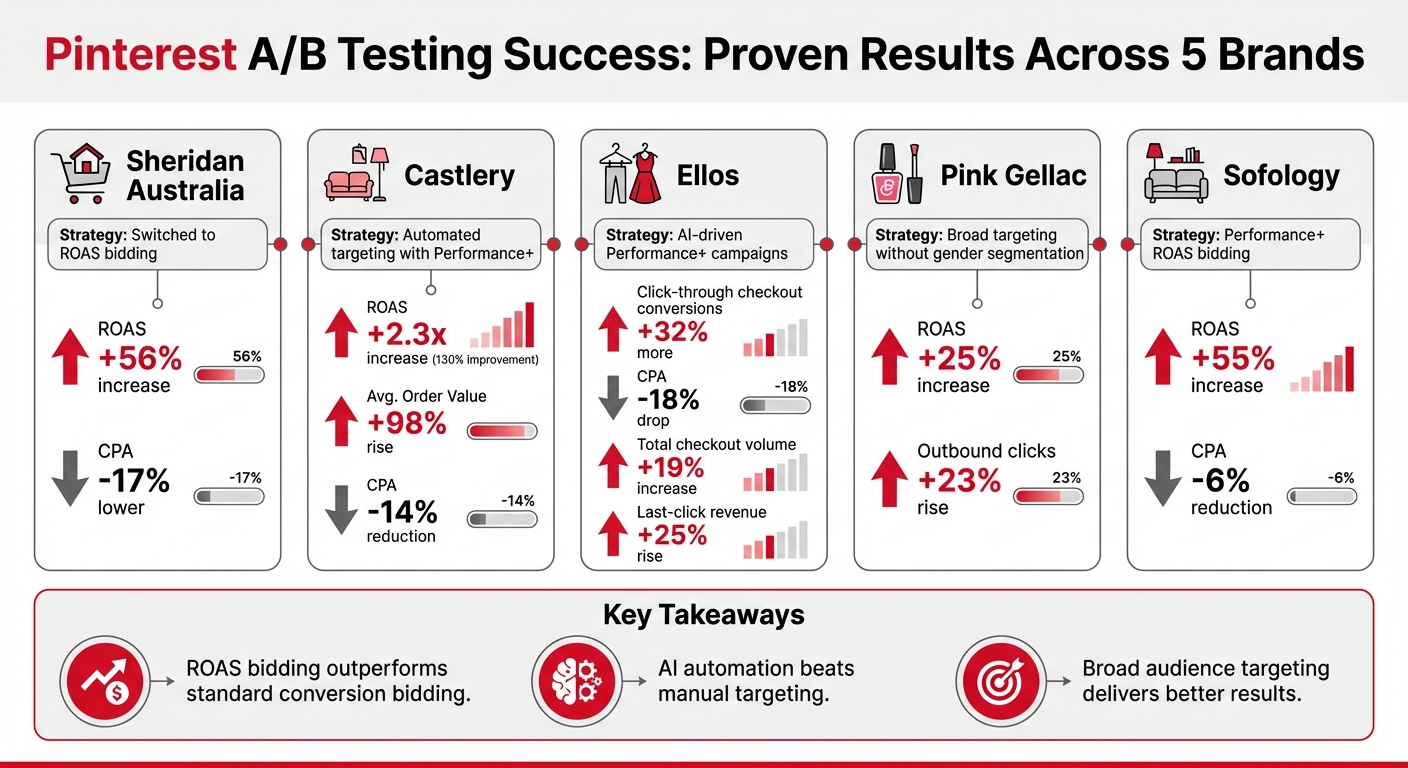

A/B testing on Pinterest ads has helped brands achieve measurable success by refining strategies and relying on data, not guesswork. Key takeaways from top PPC agencies and various businesses include:

- Higher ROAS: Sheridan Australia saw a 56% increase in return on ad spend (ROAS) by switching to ROAS bidding.

- Lower Acquisition Costs: Ellos reduced CPA by 18% through AI-driven campaigns.

- Boosted Conversions: Castlery achieved a 2.3x ROAS increase and a 98% rise in average order value using automated targeting.

- Broader Targeting Success: Pink Gellac improved ROAS by 25% without traditional gender segmentation.

Pinterest A/B Testing Results: ROAS and Conversion Improvements Across 5 Brands



Case Study Overview: Businesses That Improved Results with Pinterest A/B Testing

Four businesses successfully used Pinterest's A/B testing tools to tackle advertising hurdles and achieve measurable growth. Sheridan, an Australian homeware brand, partnered with the agency Incubeta to explore how changing bidding strategies could improve performance. The result? A 56% higher ROAS and a 17% lower CPA, showcasing the impact of a strategic approach. Pink Gellac, a nail polish brand, compared AI-driven Performance+ campaigns with its traditional gender-targeted strategy. This shift led to a 25% increase in ROAS and a 23% rise in outbound clicks.

Sofology, a UK-based furniture retailer, adjusted its focus to prioritize high-value checkout outcomes rather than just conversion volume. They achieved this by leveraging Performance+ ROAS bidding. Meanwhile, Ellos, a Nordic fashion and home retailer, conducted a six-week A/B test in Q3 2024. By comparing AI-powered Performance+ shopping campaigns with their standard setup, the team - led by Digital Media Planner Strategist Linn Bäcklin and Jellyfish Paid Social Director Mathias Zoffmann - saw 32% more click-through checkout conversions and an 18% drop in CPA.

These examples highlight how A/B testing can lead to strategic improvements on Pinterest. Let’s dive into the challenges these businesses faced before implementing these tests.

Initial Problems: Common Pinterest Ad Challenges

Before turning to A/B testing, these businesses grappled with persistent advertising challenges. Sheridan hit a growth plateau: campaigns generated sales but were not efficient enough to grow revenue profitably. Their cost structure needed refinement to improve returns.

Pink Gellac faced targeting concerns when considering Pinterest's AI-driven broad targeting, which doesn’t allow for gender segmentation. Historically, they focused on women, making the idea of expanding their demographic reach a tough decision. Similarly, Sofology needed to move beyond general conversion goals and prioritize checkout outcomes that directly influenced revenue.

Another common issue was inflated metrics. For example, Pinterest counts an impression when just one pixel is visible for one second, which can skew click-through rates without necessarily driving meaningful traffic. A controlled test offered a way to cut through that noise, as Mathias Zoffmann from Jellyfish noted:

The A/B test gave us clear evidence that Pinterest Performance+ delivers superior results compared to standard shopping campaigns.

Testing Goals: Defining Success Metrics

Setting clear, revenue-focused goals was essential to overcoming these challenges. Each business defined specific objectives for their A/B tests. Sheridan and Sofology concentrated on ROAS as their key metric, aiming to maximize the value generated for every dollar spent on ads. Ellos targeted CPA, striving to lower acquisition costs while maintaining or increasing sales volume.
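The metrics named above reduce to simple ratios. As a minimal sketch, assuming the usual definitions (ROAS as attributed revenue divided by ad spend, CPA as ad spend divided by conversions), the change between a control and a test variant could be computed as follows. The `CampaignTotals` class and every figure in it are hypothetical and are not taken from any of the case studies.

```python
from dataclasses import dataclass


@dataclass
class CampaignTotals:
    """Hypothetical totals for one arm of an A/B test."""
    spend: float       # total ad spend
    revenue: float     # revenue attributed to the ads
    conversions: int   # number of checkouts


def roas(t: CampaignTotals) -> float:
    """Return on ad spend: revenue earned per unit of spend."""
    return t.revenue / t.spend


def cpa(t: CampaignTotals) -> float:
    """Cost per acquisition: spend per conversion."""
    return t.spend / t.conversions


def relative_change(control: float, variant: float) -> float:
    """Change of the variant relative to the control (0.5 means +50%)."""
    return (variant - control) / control


# Illustrative numbers only.
control = CampaignTotals(spend=1000.0, revenue=4000.0, conversions=50)
variant = CampaignTotals(spend=1000.0, revenue=6000.0, conversions=60)

roas_lift = relative_change(roas(control), roas(variant))
cpa_change = relative_change(cpa(control), cpa(variant))

print(f"ROAS: {roas(control):.2f} -> {roas(variant):.2f} ({roas_lift:+.0%})")
print(f"CPA:  {cpa(control):.2f} -> {cpa(variant):.2f} ({cpa_change:+.0%})")
```

Note that the two metrics move in opposite directions when a test succeeds: a positive ROAS change and a negative CPA change are both improvements.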

Pink Gellac, however, prioritized outbound clicks over traditional click-through rate metrics. Paid Social Marketer Robin Meis explained their approach:

A/B testing is crucial for optimising our campaign strategies at Pink Gellac. We make decisions on new features based on data-driven insights.

Additionally, these brands wanted to determine whether Pinterest's automation tools could outperform manual campaign setups. They tested Performance+ against traditional conversion campaigns, keeping creative assets and budgets consistent while varying bidding strategies or targeting methods.

A/B Testing Methods for Pinterest Ads

With clear testing goals in mind, the businesses in these case studies used targeted A/B testing methods to identify what truly affects performance. They followed a single-variable approach, meaning only one element - such as image format, ad copy, or targeting strategy - was changed at a time, while all other factors remained constant. This method allowed them to pinpoint exactly which changes influenced outcomes. Below, we’ll dive into specific tests involving creative formats, ad copy, and targeting strategies that led to measurable improvements in ad performance.
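One way to enforce the single-variable rule is to compare the two variant setups before launch. The sketch below assumes each variant is described by a plain dictionary. The field names (`creative`, `daily_budget`, `targeting`, `bidding`) are hypothetical and do not correspond to Pinterest's actual campaign settings or API.

```python
def changed_fields(control: dict, variant: dict) -> list[str]:
    """Return the sorted names of every setting that differs between two variants."""
    keys = control.keys() | variant.keys()
    return sorted(k for k in keys if control.get(k) != variant.get(k))


def is_single_variable_test(control: dict, variant: dict) -> bool:
    """True when exactly one setting differs, so any result can be attributed to it."""
    return len(changed_fields(control, variant)) == 1


# Hypothetical setups: only the bidding strategy changes.
control_setup = {
    "creative": "catalog_feed",
    "daily_budget": 100,
    "targeting": "broad",
    "bidding": "standard_purchase",
}
variant_setup = {**control_setup, "bidding": "roas"}

print(changed_fields(control_setup, variant_setup))
print(is_single_variable_test(control_setup, variant_setup))
```

If the check reports more than one changed field, the test can still run, but any difference in results can no longer be attributed to a single change.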

Creative Testing: Static Images vs. Video Ads

The ad design platform Creatopy conducted a 30-day experiment in early 2022 with a $2,000 budget to compare static and video ads. Led by Head of Marketing Bernadett Kovacs-Dioszegi, the test revealed that static ads outperformed video ads across multiple metrics:

- Outbound clicks: Static ads generated 498 clicks, compared to 179 for video ads.

- Saves: Static ads earned 143 saves, while video ads only received 39.

- Time spent on site: Users spent an average of 3 minutes 6 seconds on the site after clicking a static ad, versus just 1 minute 16 seconds for video ads.

Interestingly, the video ad introduced the brand at the 11-second mark, but the average watch time was only 9 seconds. This reinforces the need to front-load your brand message in video content to capture attention early. For businesses experimenting with creative formats, tracking metrics like outbound clicks, saves, conversions, and time spent on site can provide valuable insights.
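To put the Creatopy figures on a common scale, the short sketch below divides each static-ad result by the matching video-ad result. The numbers are the ones reported above; the dictionary keys are illustrative names. The comparison also assumes both formats received a comparable share of the $2,000 budget, which the write-up does not state.

```python
# Results reported for the 30-day Creatopy test.
static_ads = {
    "outbound_clicks": 498,
    "saves": 143,
    "seconds_on_site": 3 * 60 + 6,   # 3 minutes 6 seconds
}
video_ads = {
    "outbound_clicks": 179,
    "saves": 39,
    "seconds_on_site": 1 * 60 + 16,  # 1 minute 16 seconds
}


def ratios(a: dict, b: dict) -> dict:
    """For each metric, how many times larger the value in `a` is than in `b`."""
    return {metric: a[metric] / b[metric] for metric in a}


static_vs_video = ratios(static_ads, video_ads)

for metric, ratio in static_vs_video.items():
    print(f"{metric}: static is {ratio:.2f}x video")
# outbound_clicks: static is 2.78x video
# saves: static is 3.67x video
# seconds_on_site: static is 2.45x video
```

Expressed this way, static ads led by roughly 2.5x to 3.7x on every reported metric.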

Ad Copy Testing: Awareness vs. Conversion-Focused Messages

Ad copy testing helps businesses refine messaging by comparing different styles to see what resonates at various points in the customer journey. Effective tests focus on headlines and call-to-action buttons, exploring variations like aesthetic versus explanatory copy or lifestyle-focused versus product-focused descriptions.

For example, one test might pit a short, direct headline (around 8 words) against a longer, more descriptive one (19 words). Research suggests that shorter descriptions often perform better, as they quickly communicate the value proposition. By keeping visuals and targeting consistent while varying the copy, businesses can measure success through conversion rates and engagement metrics to determine which approach drives the most action.

Targeting and Bidding: Broad vs. Narrow Audiences

When it comes to targeting and bidding strategies, businesses tested broad, AI-driven approaches against more traditional methods. For example:

- Sheridan Australia partnered with agency Incubeta in May 2025 to compare ROAS bidding with standard purchase bidding. Using broad audience targeting through Pinterest Performance+, their Shopping ad campaign achieved a 56% lift in ROAS and a 17% reduction in cost per acquisition. Simran Kaur, Account Director, shared:

Using Pinterest's self-serve A/B testing tool helped us unlock more efficient results for our Shopping campaigns. We'll continue using ROAS bidding as our main strategy thanks to the success we've seen.

- Pink Gellac tested Performance+ campaigns against their standard conversion campaigns, examining how AI-driven broad targeting performed without gender segmentation (many teams manage experiments like this with paid media campaign automation platforms). The results? A 25% increase in ROAS and a 23% boost in outbound clicks. Paid Social Marketer Robin Meis noted:

By testing and validating the effectiveness of Pinterest Performance+, we achieved a significant increase in ROAS. This process isn't just about confirming what works - it's about pushing the boundaries of our strategy to achieve even greater results.

These results show that AI-driven broad targeting, when paired with strategies like ROAS bidding, can often surpass the performance of manually segmented campaigns.

Results and Findings: Measured Improvements from A/B Testing

Performance Metrics: Engagement, Conversions, and ROAS

A/B testing revealed measurable growth for brands that adopted AI automation and optimized bidding strategies. These changes improved ROAS, lowered CPA, and increased conversions, as the individual case studies below show.

Castlery experienced the most striking improvement in ROAS. Between May and June 2025, the brand tested Pinterest Performance+ against their manually managed Catalogue Sales campaign in Singapore. The automated approach led to a 2.3x increase in ROAS, a 98% rise in average order value (AOV), and a 14% reduction in CPA. Camille Wong, Performance Marketing Lead, highlighted the efficiency gains:

With the Pinterest Performance+ full suite, we achieved stronger results without the manual effort typically required. It enabled us to scale up while achieving a higher ROAS.

Bidding strategies also delivered standout results. Sheridan Australia, in collaboration with agency Incubeta, tested ROAS bidding versus standard purchase bidding in May 2025. The results? A 56% boost in ROAS and a 17% drop in CPA. Similarly, Sofology tested Pinterest Performance+ ROAS bidding against traditional conversion optimization, achieving a 55% increase in ROAS and a 6% reduction in CPA. Karen Grindrod, Brand Marketing Manager, shared:

Pinterest Performance+ ROAS bidding unlocked new potential for our shopping activity on Pinterest. The strong results not only boosted our performance but also reinforced our confidence in adopting new optimizations.

Nordic retailer Ellos conducted a six-week A/B test in Q3 2024, comparing Pinterest Performance+ shopping campaigns with standard ones. The results included 32% more click-through checkout conversions, an 18% improvement in CPA, and a 19% increase in total checkout volume. Third-party analytics also confirmed a 25% rise in last-click revenue. Mathias Zoffmann, Paid Social Director at agency Jellyfish, remarked:

The A/B test gave us clear evidence that Pinterest Performance+ delivers superior results compared to standard shopping campaigns. The combination of improved signals and expanded reach helped us achieve better performance across all our key metrics.

Key Lessons: What Worked and Why

The results highlight several key takeaways. Building on earlier challenges and testing goals, these findings emphasize the importance of precise A/B testing in developing high-performing ad strategies through systematic optimization.

Three factors stood out across the most successful tests: AI automation, ROAS bidding, and broad targeting. Brands leveraging Pinterest's machine learning to pinpoint high-intent audiences consistently outperformed manual segmentation approaches.

ROAS bidding emerged as a critical factor for brands focusing on revenue quality over sheer conversion numbers. For instance, when Sheridan and Sofology shifted from standard conversion bidding to ROAS bidding, they prioritized higher-value transactions rather than simply increasing purchase volumes. This approach worked best when paired with robust tracking tools - Ellos, for example, utilized the Pinterest Conversions API and maintained accurate product feeds to ensure precise measurement during the testing period.

The transition from manual to automated campaigns not only reduced workload but also enhanced performance. Castlery's 98% increase in AOV was driven by Pinterest's AI uncovering high-intent audience segments that manual targeting had overlooked.

Conclusion: Using A/B Testing with the Right Tools

A/B testing has proven its worth, with case studies showing it can boost ROAS by up to 2.3x and increase checkout conversions by 32% through refined bidding, automation, and creative testing approaches. These impressive results highlight three main strategies for success:

- ROAS bidding outperforms standard conversion bidding: This approach prioritizes return on ad spend, delivering better outcomes.

- Automation beats manual targeting: Pinterest Performance+ automation consistently delivers superior results compared to manual efforts.

- Broad audience targeting works better: Moving away from narrow demographic filters allows AI to identify high-intent audiences. For instance, Pink Gellac achieved a 25% improvement in ROAS by trusting Pinterest's AI to pinpoint the right audience.

These findings emphasize the shift from manual strategies to AI-driven methods. Tools play a critical role in this transformation. For example, Sheridan's results show what Pinterest's self-serve A/B testing tool can deliver: according to Simran Kaur, Account Director at Incubeta, the tool helped the team "unlock more efficient results" for its Shopping campaigns, and ROAS bidding will remain its main strategy.

To maximize performance, consider partnering with specialized agencies or platforms. The Top PPC Marketing Directory (https://ppcmarketinghub.com) is a valuable resource for finding experts who can help you transition to automated campaigns or measure critical metrics like Average Order Value (AOV) and ROAS. These verified partners understand Pinterest's tools and evolving features, making them an excellent resource for achieving your goals. To consolidate reporting data from multiple platforms, many marketers also use SuperMetrics.

FAQs

What should I test first in Pinterest ads?

Start by trying out different formats to find what clicks with your audience. For instance, ads featuring lifestyle images - showing products in real-life scenarios - often grab more attention than plain product photos. You can also play around with pin layouts, like adjusting where text overlays appear or experimenting with background designs. These small tweaks can reveal which visuals get the most clicks and lead to better conversion rates for your campaigns.

How long should a Pinterest A/B test run?

A Pinterest A/B test needs to run long enough to gather sufficient data to produce results you can trust. Generally, this means keeping the test active for at least 1 to 2 weeks. The exact duration will depend on the amount of traffic and engagement your campaign generates. This timeframe helps ensure the findings are dependable and can guide your decisions effectively.
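Whether a test has gathered "sufficient data" can be checked with a significance test. The sketch below uses a standard two-proportion z-test, which is a general statistical technique and not necessarily what Pinterest's own A/B testing tool reports. The visitor and conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Compare two conversion rates; return (z statistic, two-sided p-value)."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test: 10,000 clicks per variant, 100 vs. 132 checkouts.
z, p = two_proportion_z_test(conv_a=100, n_a=10_000, conv_b=132, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.3f}")

if p < 0.05:
    print("Difference is unlikely to be chance at the 5% level.")
else:
    print("Keep the test running or treat the result as inconclusive.")
```

With lower traffic, the same relative difference yields a larger p-value, which is why low-volume campaigns may need to run well beyond the 1 to 2 week minimum.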

Do broad audiences and ROAS bidding work for small budgets?

Yes, broad audiences and ROAS bidding can work well, even on smaller budgets. For example, Sofology achieved a 55% higher ROAS using Pinterest Performance+ ROAS bidding. Similarly, Castlery increased ROAS 2.3x through automated campaigns. These success stories suggest that, with the right strategy, strong results are possible without a massive budget.