A/B testing your landing page copy is one of the most effective ways to improve conversions. It helps you understand what works by comparing two versions of a page - Version A (original) and Version B (with changes). This approach eliminates guesswork and relies on real data to optimize performance. Here’s what you need to know:

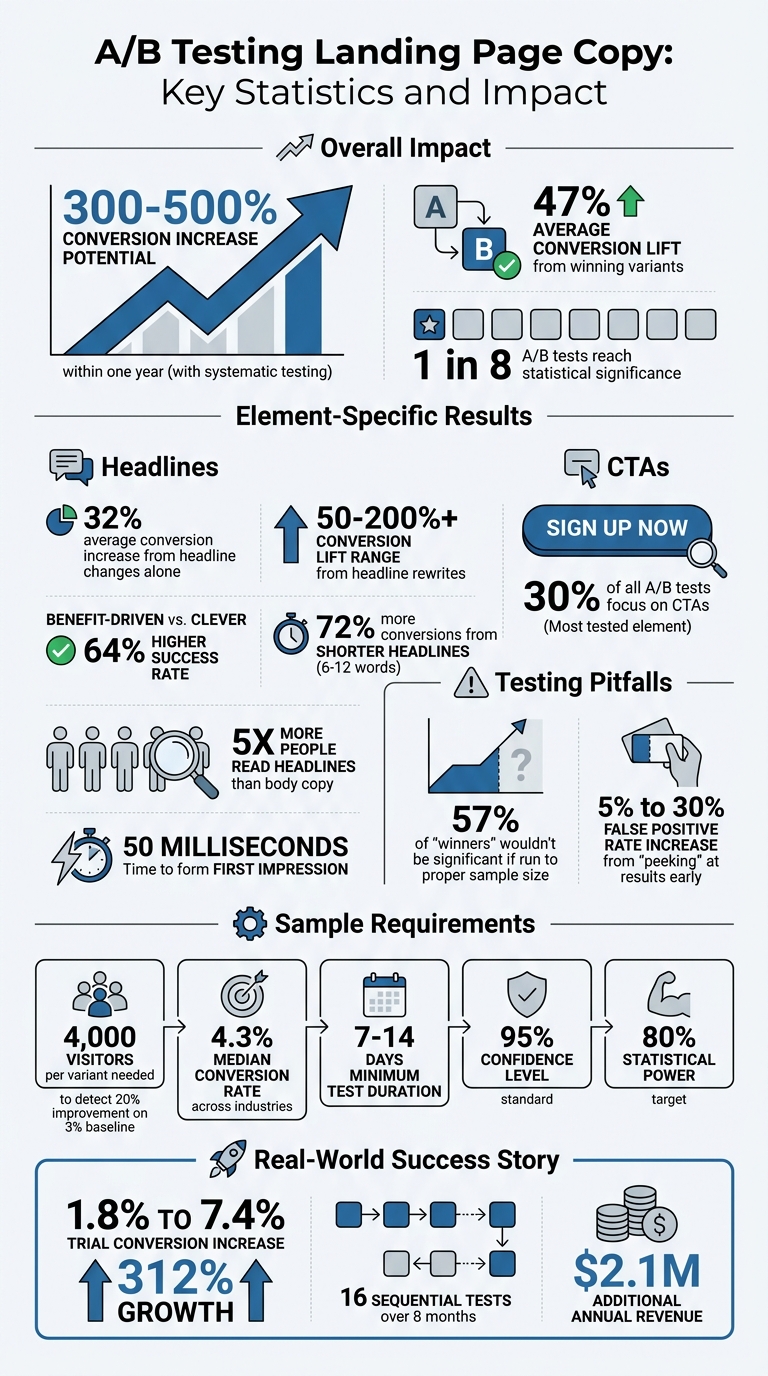

- Why it matters: Systematically testing headlines, value propositions, and CTAs can increase conversions by 300% to 500% within a year. Even small tweaks, like rephrasing a headline, can lead to a 30% boost.

- What to test: Focus on the elements that impact conversions the most:

  - Headlines: Test clarity vs. cleverness, specific numbers vs. general promises, and positive vs. negative framing.

  - Value Propositions: Compare benefit-driven vs. feature-driven messaging and experiment with tone and formatting.

  - CTAs: Try different wording, placement, and designs. First-person CTAs like "Start My Free Trial" often perform better.

- How to test: Start with a clear hypothesis, use reliable PPC tools, and ensure you gather enough data. Avoid stopping tests too early - run them for at least 7-14 days.

Key takeaway: A/B testing is a data-driven way to refine your landing pages, boost conversions, and gain insights into your audience. Even small improvements can lead to big results over time.

A/B Testing Landing Page Copy: Key Statistics and Impact on Conversions

Why Test Your Landing Page Copy

Testing your landing page copy can directly influence key metrics like conversion rates, bounce rates, and return on investment. It’s not just about changing a few words - it’s about building a system that drives consistent revenue growth.

Systematic testing delivers measurable results. For instance, winning variants in landing page tests can lead to an average conversion lift of 47%. Even something as simple as tweaking a headline can have a significant impact, with headline changes alone contributing to a 32% increase in conversions on average. In some cases, rewriting headlines has resulted in conversion lifts ranging from 50% to over 200%. These kinds of improvements can transform a campaign that’s barely breaking even into one that generates significant profits.

This kind of testing is especially critical for PPC campaign optimization, where every click comes at a cost. Ensuring your landing page copy aligns perfectly with the promise made in your ad - a principle known as "ad scent" - reduces bounce rates and makes your ad spend more effective. For example, if your ad promises "Save $142 a month", visitors should see that exact benefit highlighted on the landing page. If the page instead offers vague mentions of "saving money", you risk losing their trust. Testing helps eliminate these mismatches, ensuring your message resonates with visitors and meets their expectations.

Beyond boosting conversions, testing also uncovers valuable insights about your audience. For example, benefit-driven headlines often outperform clever or abstract ones, with some tests showing a 64% higher success rate. Additionally, shorter headlines (6–12 words) have been found to convert 72% more frequently. These findings don’t just improve individual landing pages - they can shape your overall marketing strategy, influencing everything from ad copy to email campaigns.

"Landing page conversion rate optimization done correctly starts with the copy, not the canvas." - Rob Palmer

For those looking to refine their landing page copy further, tools and resources like the Top PPC Marketing Directory (https://ppcmarketinghub.com) provide additional strategies to enhance your approach.

What to Test in Your Landing Page Copy

When it comes to fine-tuning your landing page, focus on the elements that directly impact conversions. Headlines, value propositions, and calls-to-action (CTAs) are the heavy hitters here. Testing these components systematically, often with the help of top PPC advertising tools, can reveal what drives visitors to act - and what might be pushing them away.

Headlines

Your headline is the first thing visitors notice, and it plays a huge role in whether they stick around or leave. Studies show that five times as many people read the headline as the body copy, and visitors form an impression within just 50 milliseconds. Even a small tweak to your headline can boost conversion rates by over 30%.

When testing headlines, compare clarity versus cleverness. For example, a straightforward headline like "Project Management Software for Remote Creative Teams" often outperforms something more abstract like "Your Team's New Superpower". Similarly, test whether your audience prefers feature-focused headlines (what your product does) or benefit-focused ones (what your product achieves). For instance, compare "The Only CRM with AI-Powered Lead Scoring" (feature) to "Close 30% More Deals Without Working More Hours" (benefit).

Specificity builds trust. Instead of vague promises like "Save money", use precise figures, such as "Save $142 a month". Headlines with specific, outcome-driven numbers often outperform generic claims. You can also test positive versus negative framing, such as "Grow Your Revenue by 23%" versus "Stop Wasting Money on Bad Leads".

One critical aspect is message match. If your Google Ad promises "Save $142 a month," your landing page headline should repeat that exact promise. Any mismatch between ad copy and your headline can lead to higher bounce rates and wasted ad spend.
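
A message-match check like this can be automated before a campaign goes live. The sketch below is a minimal illustration, not a standard tool: the function names and the "Energy Bill" headline are hypothetical, and it only looks for the ad's promise as a normalized substring of the headline.

```python
import re


def normalize(text: str) -> str:
    """Lowercase the text and collapse punctuation and whitespace into single spaces."""
    return re.sub(r"[^a-z0-9$%]+", " ", text.lower()).strip()


def has_message_match(ad_promise: str, headline: str) -> bool:
    """Return True when the ad's promise appears (after normalizing) inside the headline."""
    return normalize(ad_promise) in normalize(headline)


# Hypothetical ad promise and landing page headlines.
print(has_message_match("Save $142 a month", "Save $142 a Month on Your Energy Bill"))
print(has_message_match("Save $142 a month", "Start saving money today"))
```

The first headline repeats the promise and passes; the second only hints at savings and fails, which is the kind of mismatch worth catching before paying for clicks.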

| Headline Element | Variation A (Control) | Variation B (Challenger) | Goal of Test |
|---|---|---|---|
| Focus | Feature-led (What it is) | Benefit-led (What it does) | Identify what motivates visitors |
| Psychology | Positive Gain | Pain Point Avoidance | Test if users respond to gains or losses |
| Clarity | Clever/Creative | Clear/Functional | Minimize confusion |
| Data | General Promise | Specific Statistic | Build trust with precise details |

Value Propositions and Body Copy

Once your headline grabs attention, your value propositions and body copy need to seal the deal. This is where you explain why your offer matters. Testing different approaches here can turn guesswork into solid data. Even small improvements can make a big difference - raising your conversion rate from 3% to 3.5% means a 17% increase in leads without any extra traffic.

Experiment with benefit-led versus feature-led descriptions. While some audiences value technical details, others care more about the results your product delivers. You can also test whether emphasizing pain points (e.g., "Stop wasting money") outperforms aspirational messaging.

Formatting plays a role too. Try bullet points for skimmers versus narrative paragraphs for readers - or a mix of both. Also, test different tones: a professional, third-person voice might resonate more with one audience, while a casual, first-person tone might work better for another.

Replace vague marketing language with specific, measurable claims. For example, "Increase efficiency" is less compelling than "Cut processing time by 45%". Similarly, test clear, functional descriptions against more creative or brand-heavy copy to see which resonates more.

Calls-to-Action (CTAs)

Your CTA is where the magic - or the bounce - happens. Testing different aspects of your CTA, like wording, placement, and design, can have an immediate effect on click-through rates. In fact, CTAs are the most tested element, accounting for 30% of all A/B tests.

Start by testing value-focused language. For example, "Get My Free Quote" often outperforms a generic "Submit." You can also experiment with psychological triggers: action-focused CTAs ("Start Free Trial") versus benefit-focused ("Save Time Today") or outcome-focused ("Grow My Leads").

Voice and perspective matter too. First-person phrasing ("Start My Free Trial") can feel more personal than second-person ("Start Your Free Trial"). Placement is another key factor - CTAs in the hero section perform differently than those placed further down the page. On mobile, "sticky" CTA bars that stay visible as users scroll can boost engagement.

Don’t forget microcopy and anxiety reducers. Phrases like "No credit card required," "Cancel anytime," or privacy reassurances near the button can ease concerns and encourage clicks. Tailor the strength of your CTA to user intent: softer CTAs like "See It in Action" work better for visitors just browsing, while stronger CTAs like "Start Free Trial" are ideal for those ready to commit.

Finally, ensure your CTA aligns with earlier messaging. A mismatch here can confuse visitors and increase bounce rates.

How to Set Up Your A/B Tests

Setting up an A/B test correctly is essential. A poorly executed experiment doesn't just waste time and traffic - it can lead to decisions based on misleading data rather than actionable insights.

Set Your Goals and Hypotheses

Start by defining the variable you're testing and the outcome you expect. Focus on one key element, like a headline or call-to-action (CTA), as your independent variable. Then, decide on the primary metric (dependent variable) you'll use to measure success, such as conversion rate or sign-up numbers.

Prioritize testing elements that are likely to have the greatest impact. For instance, hero sections - headlines, subheadlines, and offer structures - often influence visitor behavior the most. While details like button colors might be tempting to test, changes like shifting a headline to highlight benefits instead of features can drive more noticeable results.

Use a structured hypothesis to guide your test. Frame it like this: "We believe that [change] will [outcome] because [reasoning]." For example: "We believe that changing our headline from 'Advanced CRM Software' to 'Close 30% More Deals Without Working More Hours' will boost sign-ups by at least 15% because benefit-driven messaging resonates better with our audience." This approach clarifies your reasoning and sets clear expectations.
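
One way to keep hypotheses consistent across tests is to record them in a small structure. The sketch below is only an illustration of the template above; the `Hypothesis` class and its field names are hypothetical, and the primary metric value is an assumed example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """One test hypothesis: a single change, the expected outcome, and the reasoning."""
    change: str
    expected_outcome: str
    reasoning: str
    primary_metric: str

    def statement(self) -> str:
        """Render the hypothesis in the 'We believe that ...' template."""
        return (
            f"We believe that {self.change} will {self.expected_outcome} "
            f"because {self.reasoning}."
        )


headline_test = Hypothesis(
    change=(
        "changing our headline from 'Advanced CRM Software' to "
        "'Close 30% More Deals Without Working More Hours'"
    ),
    expected_outcome="boost sign-ups by at least 15%",
    reasoning="benefit-driven messaging resonates better with our audience",
    primary_metric="sign-up conversion rate",  # assumed example metric
)
print(headline_test.statement())
```

Writing the primary metric down next to the hypothesis makes it harder to switch success criteria after the results come in.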

Look at your conversion funnel to find where drop-offs are the highest, and prioritize those areas for testing. Plan your sample size in advance and resist the urge to check results early, as this can lead to inaccurate conclusions. Once your goals are clear, choose tools that fit your traffic volume and technical needs.

Select Your Testing Tools

Your choice of tools will depend on your resources, traffic, and the channels you're using. The right tool simplifies the process and ensures your experiment aligns with your goals.

For marketers who want to avoid involving developers, tools like Unbounce and Semrush Landing Page Builder offer drag-and-drop interfaces that make it easy to set up and test landing pages.

"Unbounce allows us to quickly turn around landing pages and run A/B tests without having to pull a developer onto a project." - Matt Gardner, Director of Customer Success at RouteThis

If you're managing more complex experiments across multiple pages or channels, platforms like Optimizely and VWO are excellent options. HubSpot Enterprise users can take advantage of built-in A/B testing tools for landing pages, CTAs, and email campaigns. For campaigns on platforms like Google Ads, Microsoft Advertising, or LinkedIn Ads, native tools allow you to test ad copy and landing page URLs directly within the campaigns.

Make sure the tool matches your traffic volume. Sites with lower traffic may need longer test durations to gather reliable data. If you're running paid campaigns, resources like the Top PPC Marketing Directory (https://ppcmarketinghub.com) offer curated tools specifically for optimizing PPC campaigns.

Calculate Sample Size and Test Duration

Tests that run too briefly or with insufficient traffic often produce unreliable results. For example, detecting a 20% relative improvement on a 3% baseline with 95% confidence and 80% power requires roughly 14,000 visitors per variant under the standard two-proportion approximation. Smaller improvements need significantly more traffic.

Key factors to consider include your baseline conversion rate and the minimum detectable effect (MDE). Lower conversion rates or smaller expected improvements will require more visitors. Aim for 95% confidence and 80% statistical power in your tests.
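
To see how baseline rate and MDE drive traffic requirements, here is a minimal sketch using the common normal-approximation formula for a two-sided, two-proportion test. The function name is hypothetical, and dedicated calculators may use slightly different formulas and return different figures. Under this approximation, a 3% baseline with a 20% relative lift comes out near 14,000 visitors per variant.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(
    baseline_rate: float,
    relative_lift: float,
    confidence: float = 0.95,
    power: float = 0.80,
) -> int:
    """Visitors needed per variant for a two-sided, two-proportion test (normal approximation)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)  # rate we want to be able to detect
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


print(sample_size_per_variant(0.03, 0.20))  # 20% relative lift on a 3% baseline
print(sample_size_per_variant(0.03, 0.10))  # halving the lift needs far more traffic
```

Because the required sample grows with the inverse square of the difference, halving the detectable lift roughly quadruples the traffic you need.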

Always run tests for at least a full week, ideally two to four weeks for most landing page experiments. Weekly traffic patterns can vary, so testing for only a portion of the week risks skewed results. Avoid testing during unusual periods, like major holidays, unless you're specifically targeting those times.
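
The full-week rule can be combined with your traffic numbers to estimate a run time. This is a rough sketch with a hypothetical function name and made-up traffic figures; it assumes traffic is split evenly across variants and simply rounds up to whole weeks.

```python
from math import ceil


def duration_in_full_weeks(visitors_per_variant: int, variants: int, daily_visitors: int) -> int:
    """Days needed to reach the planned sample, rounded up to whole weeks (minimum 7)."""
    total_needed = visitors_per_variant * variants
    raw_days = ceil(total_needed / daily_visitors)
    return max(7, ceil(raw_days / 7) * 7)


# Hypothetical plan: 5,000 visitors per variant, 2 variants, 900 visitors a day.
print(duration_in_full_weeks(5000, 2, 900))
```

In this example the raw requirement is 12 days, so the test would run for 14 to cover two complete weekly cycles.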

Set your sample size before starting and resist the temptation to stop early, even if initial results look promising.

"The more radical the change, the less scientific we need to be process-wise. The more specific the change (button color, microcopy, etc.), the more scientific we should be because the change is less likely to have a large and noticeable impact on conversion rate." - Matt Rheault, Senior Software Engineer at HubSpot

For websites with limited traffic, consider alternatives like a "ship-and-watch" approach (roll out the change and monitor performance over time) instead of formal A/B testing. This method can still provide valuable insights without requiring a massive audience.

Metrics to Track During Testing

Once your test is live, keeping an eye on the right metrics is key to gathering meaningful performance insights.

Conversion rate should be your top priority. This metric measures the percentage of visitors who complete the action you want - whether that's signing up, making a purchase, or downloading something. It’s a clear indicator of how your copy changes are performing. For reference, the median conversion rate across industries is 4.3%.

Bounce rate is another important metric. It tells you how many visitors leave your page without interacting. If this number is high, it could mean your headline or opening lines aren’t grabbing attention or matching what visitors expected.

Click-through rate (CTR) is especially helpful for analyzing your call-to-action (CTA) buttons. It shows whether your CTA wording is encouraging users to take the next step.

Scroll depth measures how far down the page users go, giving you insight into how much of your content they’re engaging with. Ideally, scroll depth should fall between 60% and 80% of the page. Meanwhile, time on page can show whether visitors are truly engaging with the content or just skimming.

If your page includes forms, track the abandonment rate to see where users drop off. For example, e-commerce sites often face a 70% cart abandonment rate, which might point to issues in your copy or form design. Additionally, keep tabs on revenue and average order value (AOV). A higher conversion rate doesn’t always mean more profit if the quality of leads or the size of orders decreases. Staying focused on your primary metric will help you make sense of the data.
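
The gap between conversion rate and profit is easy to see with a quick calculation. The numbers below are invented for illustration, and the `summarize` helper is hypothetical.

```python
def summarize(visitors: int, orders: int, revenue: float) -> dict:
    """Compute conversion rate, average order value, and revenue per visitor."""
    return {
        "conversion_rate": orders / visitors,
        "aov": revenue / orders,
        "revenue_per_visitor": revenue / visitors,
    }


# Hypothetical results for two variants with equal traffic.
variant_a = summarize(visitors=10_000, orders=400, revenue=32_000.0)
variant_b = summarize(visitors=10_000, orders=460, revenue=29_900.0)

for name, stats in (("A", variant_a), ("B", variant_b)):
    print(
        f"{name}: conversion {stats['conversion_rate']:.1%}, "
        f"AOV ${stats['aov']:.2f}, "
        f"revenue per visitor ${stats['revenue_per_visitor']:.2f}"
    )
```

In this made-up case, variant B converts more visitors but earns less per visitor because its orders are smaller, so it would not be the better choice on revenue.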

"Most A/B tests produce misleading results - not because the methodology is flawed, but because they measure the wrong outcome." - KISSmetrics Editorial

Before starting, choose one primary metric to focus on. While tracking multiple metrics can provide helpful context, having a single "dependent variable" ensures your analysis stays aligned with your main business goal.

How to Read Your Test Results

Once your testing phase is over, it's time to dive into the results. This step often trips up marketers - either by calling a winner too soon or by misinterpreting the data.

What Statistical Significance Means

Statistical significance shows that the difference between your control and variation is unlikely to be due to random chance. Typically, the threshold is a 95% confidence level, which corresponds to a p-value of ≤0.05.
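
Most testing tools report the p-value for you, but it helps to see where it comes from. The sketch below uses a pooled two-proportion z-test, which is one common method and not necessarily what your tool runs; the function name and visitor counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates (pooled z-test)."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Same conversion rates (4.2% vs. 5.1%), different amounts of traffic.
large_sample = two_proportion_p_value(420, 10_000, 510, 10_000)
small_sample = two_proportion_p_value(42, 1_000, 51, 1_000)
print(f"10,000 visitors per variant: p = {large_sample:.4f}")
print(f"1,000 visitors per variant:  p = {small_sample:.4f}")
```

With 10,000 visitors per variant the difference clears the 0.05 threshold, while the identical rates on 1,000 visitors do not. This is why the same observed lift can be convincing in one test and inconclusive in another.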

"Statistical significance means unlikely due to chance, not necessarily important." - KISSmetrics Editorial

Here's an eye-opener: 57% of A/B tests labeled as "winners" wouldn't have been significant if run to the proper sample size. Cutting your test short - often called "peeking" - can inflate the false positive rate from 5% to as high as 30%.
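
The effect of peeking can be reproduced with a small simulation. The sketch below runs A/A tests, where both variants share the same true conversion rate, so every declared "winner" is a false positive. All parameters (400 experiments, 10 looks, a 10% rate) are arbitrary assumptions, and the exact rates depend on them.

```python
import random
from math import sqrt
from statistics import NormalDist


def is_significant(conv_a: int, n_a: int, conv_b: int, n_b: int, alpha: float = 0.05) -> bool:
    """Pooled two-proportion z-test at the given significance level."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    if pooled in (0.0, 1.0):
        return False
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = abs(conv_b / n_b - conv_a / n_a) / std_err
    return 2 * (1 - NormalDist().cdf(z)) < alpha


def simulate_false_positives(experiments=400, looks=10, visitors_per_look=200,
                             rate=0.10, seed=7):
    """Return (false positive rate with peeking, false positive rate with one final check)."""
    rng = random.Random(seed)
    peeking_wins = fixed_wins = 0
    for _experiment in range(experiments):
        conv_a = conv_b = n = 0
        stopped_early = False
        for _look in range(looks):
            conv_a += sum(rng.random() < rate for _ in range(visitors_per_look))
            conv_b += sum(rng.random() < rate for _ in range(visitors_per_look))
            n += visitors_per_look
            if is_significant(conv_a, n, conv_b, n):
                stopped_early = True  # a peeker would stop here and call a winner
        peeking_wins += stopped_early
        fixed_wins += is_significant(conv_a, n, conv_b, n)  # only the final look counts
    return peeking_wins / experiments, fixed_wins / experiments


peeking_rate, fixed_rate = simulate_false_positives()
print(f"False positives when checking at every look: {peeking_rate:.1%}")
print(f"False positives when checking once at the end: {fixed_rate:.1%}")
```

Checking once at the planned sample size keeps false positives near the nominal 5%, while stopping at the first significant look pushes the rate well above it.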

"Stopping a test after two days because 'variant B is winning' is the most expensive mistake marketing teams make." - Liam Dunne, Growth Marketer, Discovered Labs

To avoid these costly errors, calculate your required sample size before starting the test. Stick to running it for at least 7–14 days to account for full business cycles. Keep in mind, only 1 in 8 A/B tests actually reach statistical significance - so patience is key.

Understanding statistical significance helps ensure that your results lead to actionable and reliable insights.

Compare Performance Across Variants

Once you've confirmed statistical significance, it's time to directly compare performance metrics between your control and variation. A simple comparison table can help you evaluate key data side by side:

| Metric | Control (A) | Variation (B) | Difference (absolute and relative) |
|---|---|---|---|
| Conversion Rate | 4.2% | 5.1% | +0.9 points (+21.4%) |
| Bounce Rate | 58% | 52% | -6 points (-10.3%) |
| Time on Page | 1:42 | 2:08 | +26 sec (+25.5%) |
| Click-Through Rate | 12% | 15% | +3 points (+25%) |
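
The difference column in the table above mixes two kinds of change: the absolute gap between the two values and the relative change against the control. A short sketch of the arithmetic, using a hypothetical `compare` helper and the table's own figures (time on page converted to seconds):

```python
def compare(control: float, variation: float) -> tuple[float, float]:
    """Return (absolute difference, relative change in percent) versus the control."""
    absolute = variation - control
    relative = absolute / control * 100
    return absolute, relative


metrics = {
    "Conversion Rate (%)": (4.2, 5.1),
    "Bounce Rate (%)": (58, 52),
    "Time on Page (sec)": (102, 128),
    "Click-Through Rate (%)": (12, 15),
}
for name, (control, variation) in metrics.items():
    absolute, relative = compare(control, variation)
    print(f"{name}: {absolute:+.1f} ({relative:+.1f}%)")
```

Reporting both numbers avoids ambiguity: a move from 4.2% to 5.1% is a gain of 0.9 percentage points but a relative lift of about 21%.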

Break down the data further by segmenting it by device type or traffic source. For example, a variation might underperform overall but excel with mobile users or a specific referral source. These insights can guide your next steps for optimization.
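
A segment breakdown can be as simple as grouping results by device. The sketch below uses invented counts and a hypothetical helper to show a variation that loses overall yet wins on mobile.

```python
# Hypothetical aggregated results: (variant, device) -> (visitors, conversions)
results = {
    ("A", "desktop"): (6000, 300),
    ("B", "desktop"): (6000, 240),
    ("A", "mobile"): (4000, 120),
    ("B", "mobile"): (4000, 168),
}


def conversion_rates_by_segment(data: dict) -> dict:
    """Return {device: {variant: conversion rate}} from aggregated counts."""
    rates: dict = {}
    for (variant, device), (visitors, conversions) in data.items():
        rates.setdefault(device, {})[variant] = conversions / visitors
    return rates


def overall_rate(data: dict, variant: str) -> float:
    """Conversion rate for one variant across all devices."""
    visitors = sum(v for (name, _), (v, _) in data.items() if name == variant)
    conversions = sum(c for (name, _), (_, c) in data.items() if name == variant)
    return conversions / visitors


rates = conversion_rates_by_segment(results)
for device, by_variant in rates.items():
    print(device, {name: f"{rate:.1%}" for name, rate in by_variant.items()})
print("overall", {name: f"{overall_rate(results, name):.1%}" for name in ("A", "B")})
```

Each segment contains fewer visitors than the full test, so a segment-level difference needs its own significance check before you act on it.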

Finally, think about practical significance. Even if the results are statistically valid, ask yourself if a small lift - like a 0.5% increase in conversion rate - justifies the effort or cost of making the change. And always account for external factors, such as holiday traffic surges, technical issues, or overlapping campaigns, which could have influenced your results.

Best Practices for Continuous Testing

Once you've set up and analyzed your A/B tests, the journey doesn’t stop there. A/B testing isn’t a one-time task - it’s an ongoing process of refining your pages, and that consistency is what drives long-term results. In fact, companies that consistently apply systematic A/B testing often see conversion rates improve by 300% to 500% within their first year. Achieving these results, however, requires discipline and a well-thought-out strategy.

Test One Variable at a Time

When running tests, focus on one element at a time. Why? Because testing multiple changes - like a new headline, a different CTA color, and a shorter form - all at once makes it impossible to know which change drove the results.

Stick to isolating one variable per test. For example, test a new headline first. Once you’ve identified a winner, make it your new control and move on to the next variable, such as the CTA text or hero image. This step-by-step approach builds a solid foundation, allowing each test to refine your results further.

Keep Testing Regularly

Treat optimization as an ongoing habit rather than a one-off project.

"Optimization is a mindset." - Josh Gallant, Founder of Backstage SEO

To stay ahead, adopt a continuous cycle: hypothesize, test, analyze, implement the winner, and repeat. Start with high-impact elements like headlines and value propositions before tweaking smaller details, such as button colors or font styles. And remember, disciplined testing matters more than quantity - "Three real tests a year beat thirty undisciplined ones".

Even small improvements can add up to big gains. Regular testing not only enhances numerical metrics but also strengthens the overall persuasiveness of your content. To ensure reliable data, run your tests for full-week increments (7 or 14 days) to capture differences in weekday and weekend behavior.

Use Customer Feedback

Numbers tell part of the story, but qualitative insights reveal the "why" behind user behavior. Use tools like exit surveys, user interviews, heatmaps, and session replays to dig deeper into what’s working and what’s not. For instance:

- Exit surveys on high drop-off pages can uncover barriers to conversion.

- User interviews can highlight pain points your copy should address.

- Heatmaps and session replays can pinpoint frustration points, like users clicking on non-interactive elements or stopping abruptly while scrolling.

Customer testimonials are also a goldmine for crafting more compelling headlines. These qualitative insights go beyond raw data, helping you make meaningful changes that genuinely resonate with users. By combining data-driven testing with customer feedback, your landing page can continually evolve to maximize conversions.

Conclusion

A/B testing takes the guesswork out of your strategy and replaces it with data-backed decisions that drive conversions. By systematically testing elements like headlines, value propositions, and CTAs, businesses can see measurable improvements in PPC performance. In fact, companies that commit to disciplined testing often achieve conversion boosts of 300% to 500% within their first year.

Even small wins can add up quickly. For example, one B2B software company saw its trial conversions jump from 1.8% to 7.4% - roughly a 311% increase - over just eight months. This was achieved by running 16 sequential tests, resulting in an impressive $2.1M in additional annual revenue. That’s the impact of a data-driven approach.
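
To see how modest wins compound, the sketch below works out the average lift per test implied by going from 1.8% to 7.4% over 16 tests. It assumes, purely for illustration, that every test contributed an equal relative lift, which is unlikely in practice since some tests lose or come back flat. The function name is hypothetical.

```python
def average_lift_per_test(start_rate: float, end_rate: float, tests: int) -> float:
    """Equal relative lift per test that compounds from start_rate to end_rate."""
    return (end_rate / start_rate) ** (1 / tests) - 1


per_test = average_lift_per_test(0.018, 0.074, 16)
total = 0.074 / 0.018 - 1
print(f"Total relative increase: {total:.0%}")
print(f"Average lift per test if spread evenly: {per_test:.1%}")
```

Under that assumption each test only needs to deliver a lift of roughly 9% for the sequence to more than quadruple the conversion rate.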

To maximize results, revisit your testing principles regularly. Combine numbers from analytics with insights from user feedback, interviews, and heatmaps. This helps you uncover not just what works but also why it works, ensuring that your optimizations truly resonate with your audience. Consistent refinement is what drives long-term success.

For PPC campaigns, testing is even more critical. Your landing page must deliver on your ad’s promise. When there’s a strong message match, expensive clicks are more likely to turn into conversions. Every gain in conversion rate means more revenue from the same ad spend.

Want to get started? Check out the Top PPC Marketing Directory to find tools that simplify your testing process - whether it’s A/B testing platforms, statistical calculators, or landing page builders. With the right resources, turning insights into revenue becomes a whole lot easier.

FAQs

How do I choose the best copy change to test first?

When aiming to improve conversion rates, start with the elements that have the biggest impact - like the headline or CTA buttons. Testing the hero copy (your headline and subheadline) is especially effective since it plays a major role in grabbing attention and driving engagement.

Focus on one element at a time to keep your tests clear and actionable. Use performance data to guide your decisions and craft a specific hypothesis for each test. For example, prioritize testing changes to your value proposition - this can significantly influence user decisions - rather than minor details like button colors, which tend to have a smaller effect.

What should I do if my site doesn’t get enough traffic for a reliable A/B test?

If your website doesn't get enough traffic to support reliable A/B testing, shift your attention to gathering qualitative feedback. Tools like surveys, user interviews, or feedback forms can help uncover potential issues and areas for improvement. Based on this feedback, you can implement small, incremental changes and track how they impact performance over time, instead of waiting for statistical significance from traditional testing methods.

How can I tell if a “winning” variant actually improves revenue, not just clicks?

To determine if a "winning" variant actually increases revenue, it's important to monitor its effect on key metrics like revenue and retention across the entire customer journey. Don't just stop at tracking initial clicks or conversions - dig deeper. Look at how the variant performs over time and how it supports your long-term objectives.